Last year I was at a conference when a peer leaned over and described something that – as an HR leader – stopped me cold. It wasn't a one-off story about a bad hire. It was a coordinated, scalable fraud operation. What I heard that day has only become more relevant since. So let's talk about it.

This isn't resume padding

When most people hear "fake job applicant," they picture someone exaggerating their credentials or listing skills they don't quite have.

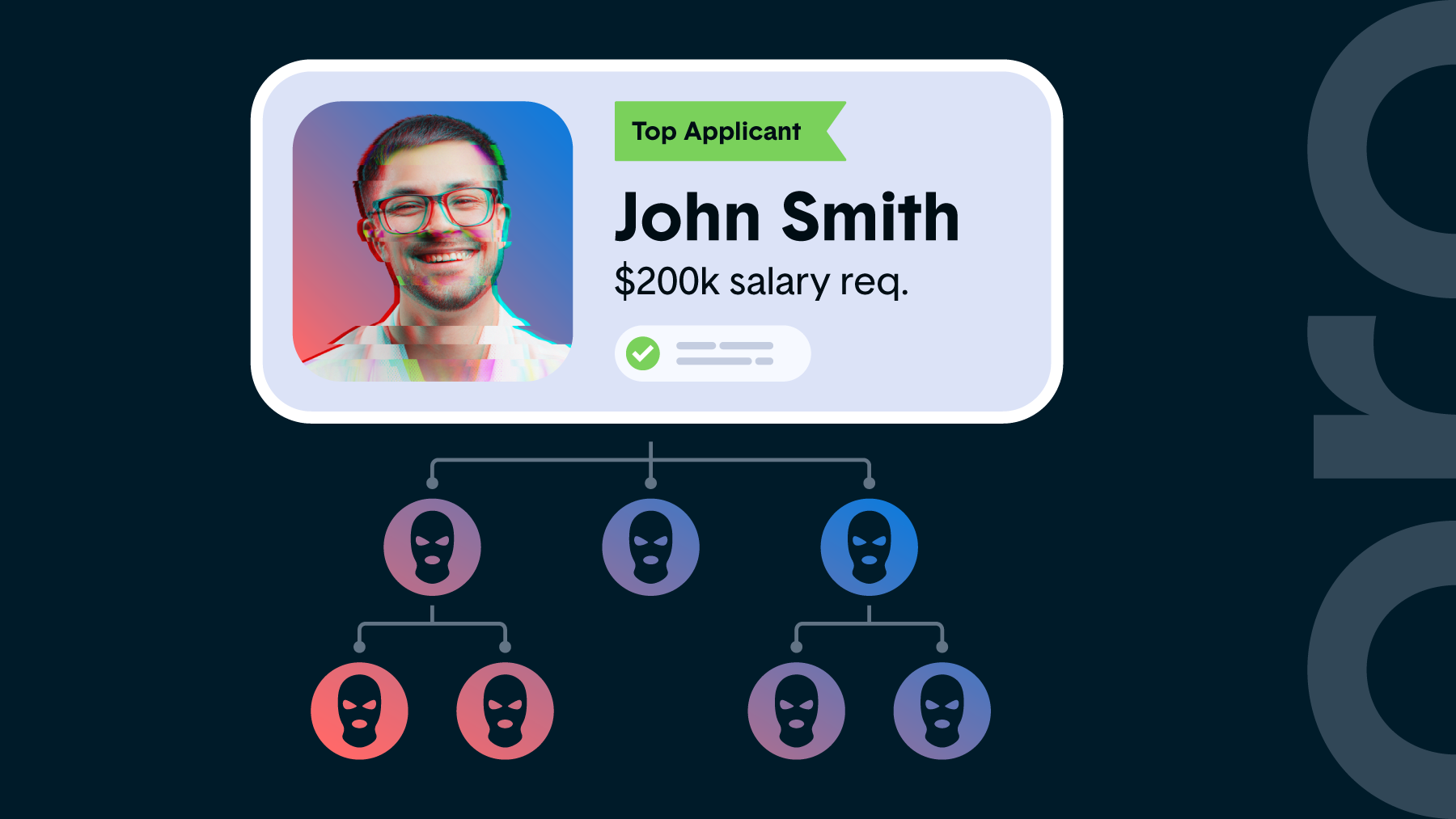

That's not what we're dealing with here. What's happening now involves organized teams running sophisticated fraud operations with a single goal: land a U.S.-based engineering role, collect a paycheck, and disappear before anyone notices.

Here's how it works. AI submits applications at scale across dozens of companies simultaneously. When a screening interview is requested, a separate group that has practiced and prepared for technical assessments takes over. Video interviews go to yet another team. References are fabricated and covered by dedicated people who know exactly what to say. Once an offer is accepted, a rotating cast shows up to post-hire meetings just long enough to maintain the illusion.

The economics make it worth their while

The math here is what makes this so alarming. A $200K engineering salary paid over 90 days works out to roughly $50,000. Most companies have a 90-day probationary window for new hires, which these operations know and exploit. Before termination kicks in, they've collected their payout and moved on.

Scale that to 10 companies running simultaneously and you're looking at roughly $500,000 from a single 90-day cycle, and more if the operation repeats through the year. This process also carries far less legal risk than most other forms of fraud, is difficult to trace, and is alarmingly easy to repeat.
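For anyone who wants to check the numbers, here is a minimal back-of-envelope sketch in Python. The salary, window, and company count come from the figures above; prorating on a 365-day year and using gross (pre-tax) pay are my simplifying assumptions, and the function names are purely illustrative.

```python
# Back-of-envelope estimate of what one 90-day cycle pays out.
ANNUAL_SALARY = 200_000   # engineering salary cited in the text
WINDOW_DAYS = 90          # probationary window cited in the text
CONCURRENT_ROLES = 10     # number of companies cited in the text


def payout_per_role(annual_salary: float, days: int) -> float:
    """Gross pay for `days` of work, prorated on a 365-day year (assumption)."""
    return annual_salary * days / 365


def payout_per_cycle(annual_salary: float, days: int, roles: int) -> float:
    """Gross pay across `roles` jobs held at the same time for `days`."""
    return payout_per_role(annual_salary, days) * roles


per_role = payout_per_role(ANNUAL_SALARY, WINDOW_DAYS)
per_cycle = payout_per_cycle(ANNUAL_SALARY, WINDOW_DAYS, CONCURRENT_ROLES)

print(f"Per role, one window:  ${per_role:,.0f}")
print(f"All roles, one window: ${per_cycle:,.0f}")
```

The per-role figure lands just under $50,000, and ten roles land just under $500,000. That total covers one 90-day window only; it makes no assumption about how often the cycle repeats.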

Peers at both large enterprises and early-stage startups tell me they've been hit, so this isn't a niche problem. It's becoming a cost of doing business in tech hiring.

The uncomfortable truth

The tools available to bad actors are currently ahead of what most companies have to detect them. AI-generated applications are indistinguishable from human ones at scale. Deepfake technology has made video verification unreliable. Reference checks, already a weak signal, are now easily spoofed. And the speed of modern hiring, which is driven by pressure to fill roles fast, creates exactly the conditions these operations need to slip through.

To be clear, this is not an indictment of hiring teams, and most companies I've spoken with are actively working on this problem. Some have gone as far as paying for sponsored LinkedIn ads telling candidates not to apply anywhere except their official careers page, because fake recruiter scams are running alongside fake applicant scams, and both are identity theft at scale.

The challenge is that you're often fighting something you can't fully see until it's already happened.

What companies can do right now

The technology gap is closing, and there are some genuinely promising detection solutions emerging. But while you're waiting for the tooling to catch up, there are practical steps worth taking today.

- Verify identity deliberately. Add a step that's harder to fake, such as a live, unscheduled video call early in the process, a request to share their screen during an assessment, or an in-person component if your role allows for it.

- Triangulate signals. One interview is a single data point. Multiple interviewers across multiple formats – and ideally across multiple days – make it significantly harder to coordinate a team-based deception.

- Treat references differently than legacy processes do. Don't just call the numbers provided. Instead, find references independently through LinkedIn or mutual connections. Ask specific, unpredictable questions that a coached third party would struggle to answer convincingly.

- Flag inconsistencies early. Timezone mismatches, unusual lag during video calls, reluctance to turn on a camera, or equipment that seems inconsistent with the role are all worth noting and following up on.

- Move fast on termination when something feels off. If post-hire behavior raises flags, such as vague responses, missed deliverables, or unusual access patterns, trust your instincts and act quickly. The 90-day window is a known target.
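If your team tracks candidates in a spreadsheet or script, the checklist above can be turned into a simple shared log. What follows is a minimal sketch in Python, not a detection tool: the flag names, the `CandidateReview` class, and the two-flag threshold are all hypothetical choices of mine for illustration, and any real threshold should be set by your own hiring and security teams.

```python
from dataclasses import dataclass, field

# Hypothetical flag names based on the checklist above; not a standard taxonomy.
KNOWN_FLAGS = {
    "timezone_mismatch",
    "video_lag",
    "camera_reluctance",
    "equipment_mismatch",
    "reference_unverified",
}


@dataclass
class CandidateReview:
    candidate_id: str
    # Maps each flag to the interview stages where someone observed it.
    flags: dict[str, set[str]] = field(default_factory=dict)

    def note(self, flag: str, stage: str) -> None:
        """Record that `flag` was observed during `stage`."""
        if flag not in KNOWN_FLAGS:
            raise ValueError(f"unknown flag: {flag}")
        self.flags.setdefault(flag, set()).add(stage)

    def needs_follow_up(self, min_flags: int = 2) -> bool:
        """True once `min_flags` distinct flags are logged (arbitrary threshold)."""
        return len(self.flags) >= min_flags


review = CandidateReview("candidate-001")
review.note("timezone_mismatch", "recruiter_screen")
review.note("camera_reluctance", "technical_interview")
print(review.needs_follow_up())
```

The point of recording the stage alongside each flag is triangulation: a single oddity in one interview is easy to explain away, while different oddities noted by different interviewers are worth a follow-up conversation.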

Upfront identity verification is a critical first step

Compliance and security teams have been sounding alarms about fraud for years. What's new is that hiring is now a direct attack surface.

As people leaders, we need to treat identity verification with the same rigor we apply to background checks. The candidates who make it through your process are getting access to your systems, your data, and your team. That access has value, and organized actors know it.

The good news is that awareness is growing, detection tools are improving, and the industry is starting to share intelligence on this more openly. The companies that get ahead of it now will be better positioned when this becomes an even more common topic of conversation. And based on what I'm seeing, that conversation is coming sooner than most people expect.
